About ten years ago, as the pace of HR technology migration to the cloud started to heat up, I began having many more conversations with organizations that were struggling with the challenges of planning a system migration and deciding what to do with the data from their old systems afterwards. This became such a common conversation that it formed part of the reason for One Model coming into existence. Indeed, much of the initial thought noodling was around how to support the great cloud migration that was, and still is, underway. In fact, I don't think this migration is ever going to end, as new innovation and technology keep becoming available in the HR space. The pace of adoption is increasing, and the large systems implementation firms (Accenture, Deloitte, Cognizant, Rizing, etc.) are making more money than ever. Even what might be considered a small migration between two similar systems can cost huge amounts of money and time to complete.

One of the core challenges of people analytics has always been the breadth and complexity of the data set and how to manage and maintain this data over time.

Do this well, though, and what you have is a complete, connected view of the data across systems that evolves with your system landscape. Why, then, are we not thinking in a larger context about how this data infrastructure can support our organization's adoption of innovation? After all, we have a perfect data store, toolset, and view of our data to facilitate migration.

The perfect people analytics infrastructure has implemented an HR Data Strategy that disconnects the concept of data ownership from the transactional system of choice.

This has been an evolving conversation, but my core view is that as organizations increase their analytical capability, they will put in place a data strategy that supports the ability to choose any transactional system to manage their operations. Being able to move quickly between systems and manage legacy data alongside new data is key to adopting innovation, and the organizations that do this best will reap the benefits.

Let's take a look at a real example, but note that I am ignoring the soft skill components of how to tackle data structure mapping and the conversations required to identify business logic, etc., as this still needs human input in a larger system migration.

Using People Analytics for System Migration

Recently we deployed our people analytics infrastructure with a customer specifically to support the migration of data from Taleo Business Edition to Workday's Recruiting module. While this isn't our core focus as a people analytics company, we had just completed one of the last functional pieces we needed to accomplish it, so I was excited to see what we could do.

Keep in mind that the steps and process we worked through below would be the same on your own infrastructure, but One Model has some additional data management features that grease the wheels.

To support the system migration, we needed to be able to:

- Extract from the source system (Taleo Business Edition), including irregular data (resume files)

- Understand the source and model it to an intermediate common data model

- Validate all source data (metrics, quality, etc.)

- Model the intermediate model to the destination target model

- Push to the destination (Workday)

- Extract from the destination and validate the data as correct or otherwise

- Infinitely and automatically repeat the above as the project requires.
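The steps above can be sketched as a small, repeatable pipeline. This is a minimal illustration under stated assumptions, not One Model's implementation: the `MigrationPipeline` class, the `apply_all` helper, and the column names are all hypothetical, and the push to the destination and the round-trip extract are left out. It shows where business logic plugs in: one list of transforms between the source and the intermediate model, and another between the intermediate and target models.

```python
from dataclasses import dataclass, field
from typing import Callable

# Hypothetical record shape: one row of a table, keyed by column name.
Record = dict[str, object]
Transform = Callable[[Record], Record]


def apply_all(rows: list[Record], transforms: list[Transform]) -> list[Record]:
    """Run every transform, in order, over every row."""
    out = []
    for row in rows:
        for transform in transforms:
            row = transform(row)
        out.append(row)
    return out


@dataclass
class MigrationPipeline:
    """Hypothetical pipeline: extract -> intermediate model -> validate -> target model."""
    extract: Callable[[], list[Record]]
    validate: Callable[[list[Record]], list[str]]
    to_intermediate: list[Transform] = field(default_factory=list)  # step 2 business logic
    to_target: list[Transform] = field(default_factory=list)        # step 4 business logic

    def run(self) -> tuple[list[Record], list[str]]:
        # Pushing to the destination and round-trip validation are omitted here.
        intermediate = apply_all(self.extract(), self.to_intermediate)
        issues = self.validate(intermediate)
        return apply_all(intermediate, self.to_target), issues


# Usage with made-up column names (not the real Taleo or Workday schemas).
pipeline = MigrationPipeline(
    extract=lambda: [{"CandidateID": 1, "Status": "hired"}],
    validate=lambda rows: [f"missing id: {r}" for r in rows if r.get("candidate_id") is None],
    to_intermediate=[lambda r: {"candidate_id": r["CandidateID"], "status": r["Status"]}],
    to_target=[lambda r: {**r, "status": r["status"].upper()}],
)
target_rows, issues = pipeline.run()
print(target_rows, issues)  # [{'candidate_id': 1, 'status': 'HIRED'}] []
```

Because each run is just a function call, re-running the whole flow after a source change costs nothing extra, which is what makes repeating it "as the project requires" practical.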

Business logic to transform and align data from the source to the target can be applied at either the second step (source to intermediate model) or the fourth step (intermediate to target model), depending on the requirement for the transformation. Below is the high-level view of the flow for this project.

In more detail

The Source

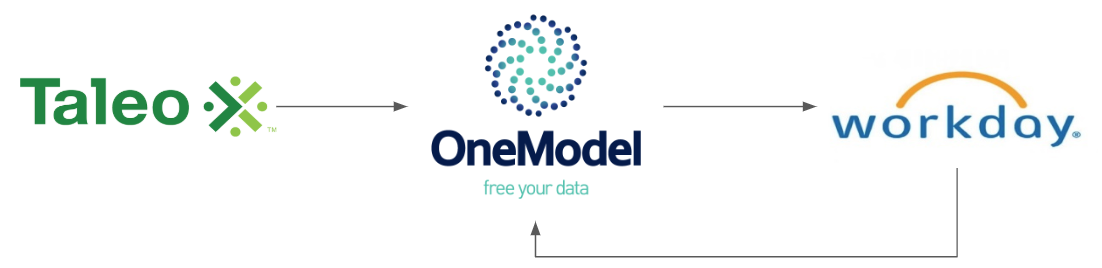

The source data set consisted of 132 tables extracted from the Taleo Business Edition API, plus a separate collection of resume attachments retrieved via a Python program.

Luckily, we already understood this source and had modeled it.

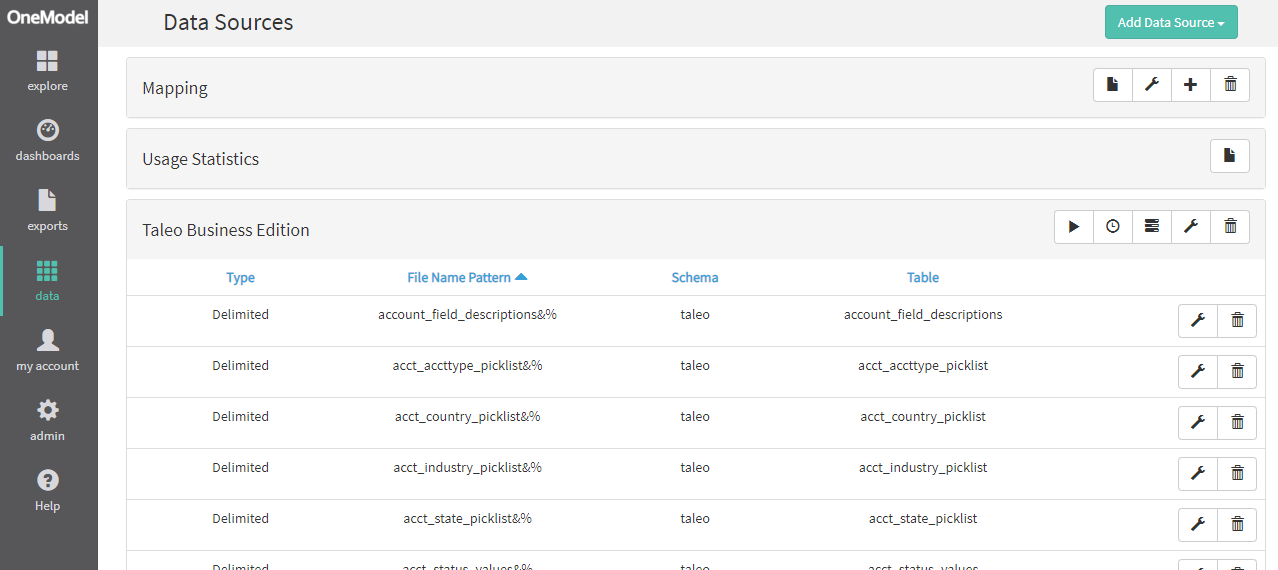

Model and Transform

We already had models for Taleo, so the majority of the effort here went into catering for the business logic to go from one system to the other and any customer-specific logic that needed to be built. This was our first time building towards a Workday target schema, so the bulk of the time was spent here, but this point-to-point model is now essentially a template for reuse.
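One way to make a point-to-point model reusable is to keep the mapping as data rather than code. The sketch below uses hypothetical column names and stage values (they are not the actual Taleo Business Edition or Workday schemas) and shows a field rename plus a value translation; `map_record`, `FIELD_MAP`, and `VALUE_MAPS` are illustrative names only.

```python
# Hypothetical column names and values; not the real Taleo or Workday schemas.
FIELD_MAP = {  # intermediate field -> source column
    "candidate_id": "CandidateID",
    "email": "Email",
    "stage": "Status",
}
VALUE_MAPS = {  # per-field lookup of source value -> intermediate value
    "stage": {"Hired": "Offer Accepted", "Rejected": "Declined"},
}


def map_record(src: dict, field_map: dict, value_maps: dict) -> dict:
    """Rename columns per field_map and translate values per value_maps."""
    out = {}
    for target_field, source_field in field_map.items():
        value = src.get(source_field)
        lookup = value_maps.get(target_field)
        if lookup is not None and value is not None:
            # Unknown values pass through unchanged so they surface in validation.
            value = lookup.get(value, value)
        out[target_field] = value
    return out


row = {"CandidateID": 7, "Email": "a@example.com", "Status": "Hired"}
print(map_record(row, FIELD_MAP, VALUE_MAPS))
# {'candidate_id': 7, 'email': 'a@example.com', 'stage': 'Offer Accepted'}
```

Pointing the same function at a different source or target then means editing the two dictionaries, not the code.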

Below are some of the actual data model transformations taking place, along with the intermediate and output tables being created in the process.

Validation and Data Quality

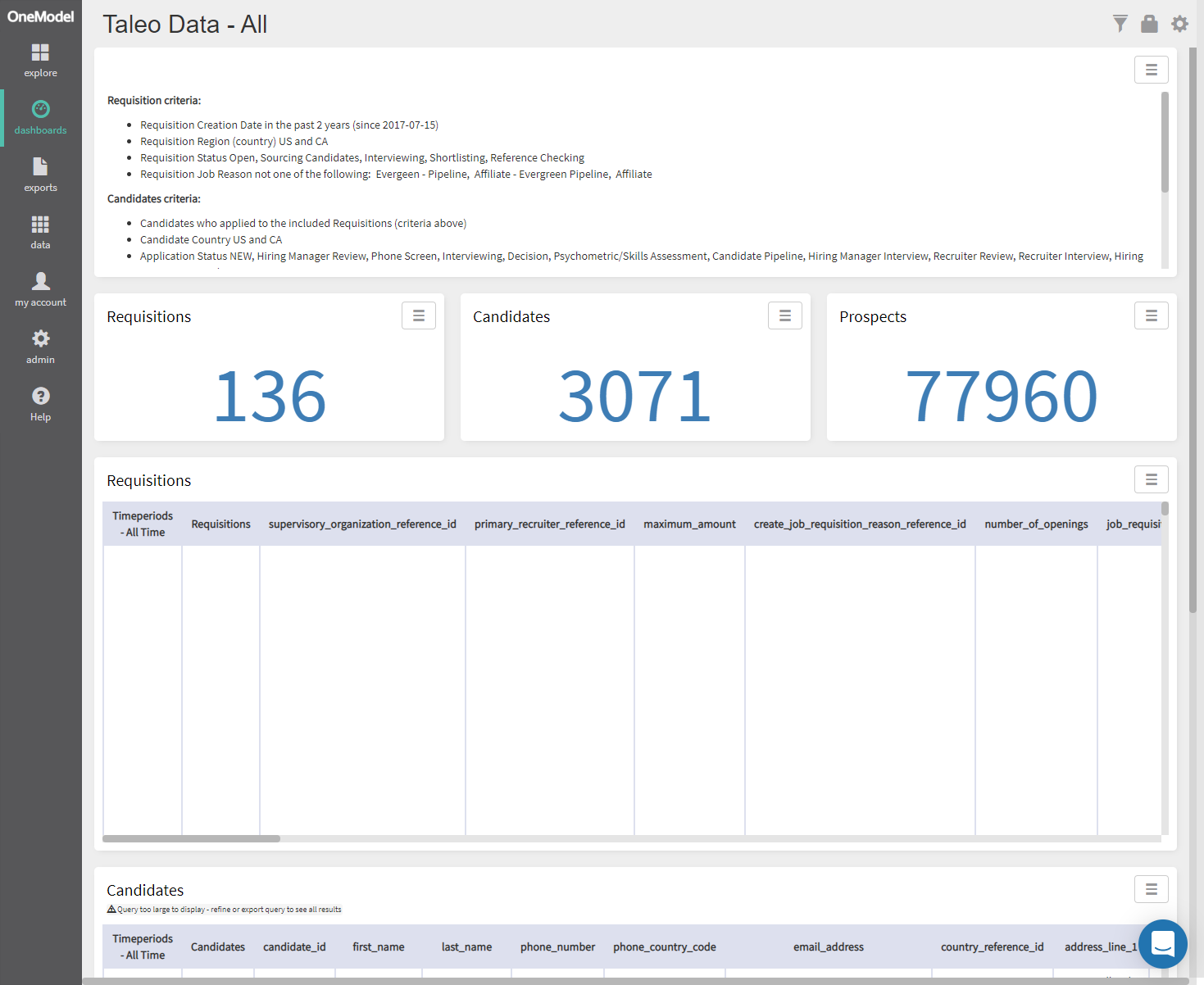

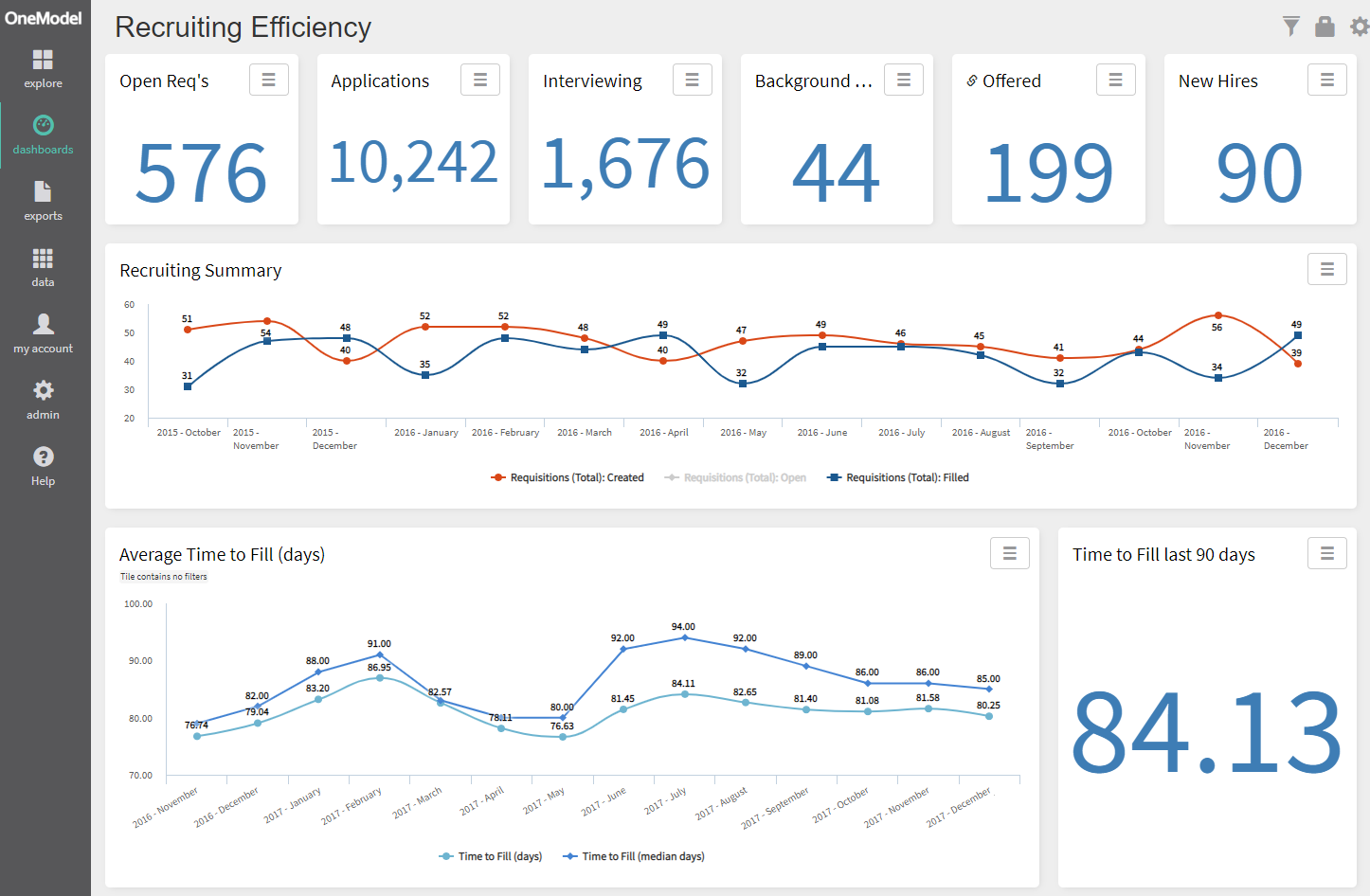

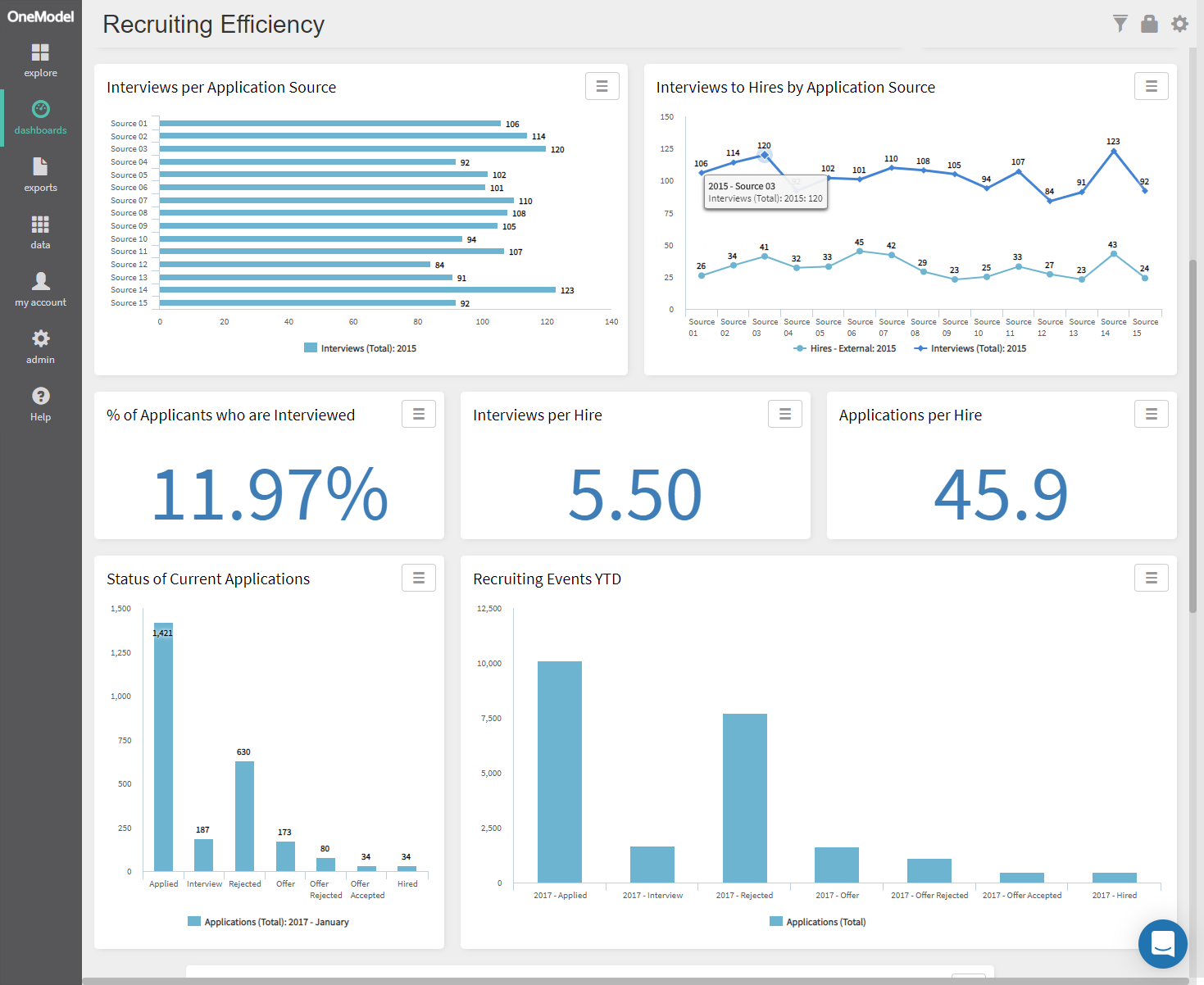

Obviously, we need to view the data for completeness and quality. A few dashboards give us the views we need to do so.

Analytics provides the ability to measure the data and a window to drill through and validate that the numbers are accurate and as expected. If the source system is still in use, filtering by time allows new data to be viewed or exported to provide incremental updates.
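Filtering by time for incremental updates is commonly done with a high-water mark: remember the latest change you have seen and pick up only rows modified after it. A minimal sketch, assuming each row carries a hypothetical `last_modified` timestamp; rows without one are skipped here and would need a full extract in practice.

```python
from datetime import datetime


def incremental_batch(rows, watermark, ts_field="last_modified"):
    """Return rows modified after `watermark` plus the new watermark for the next run."""
    # Rows with no timestamp cannot be placed in time, so they are left out.
    changed = [r for r in rows if r.get(ts_field) is not None and r[ts_field] > watermark]
    new_watermark = max((r[ts_field] for r in changed), default=watermark)
    return changed, new_watermark


rows = [
    {"id": 1, "last_modified": datetime(2024, 1, 1)},
    {"id": 2, "last_modified": datetime(2024, 1, 5)},
    {"id": 3, "last_modified": None},
]
batch, mark = incremental_batch(rows, datetime(2024, 1, 2))
print([r["id"] for r in batch], mark)  # [2] 2024-01-05 00:00:00
```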

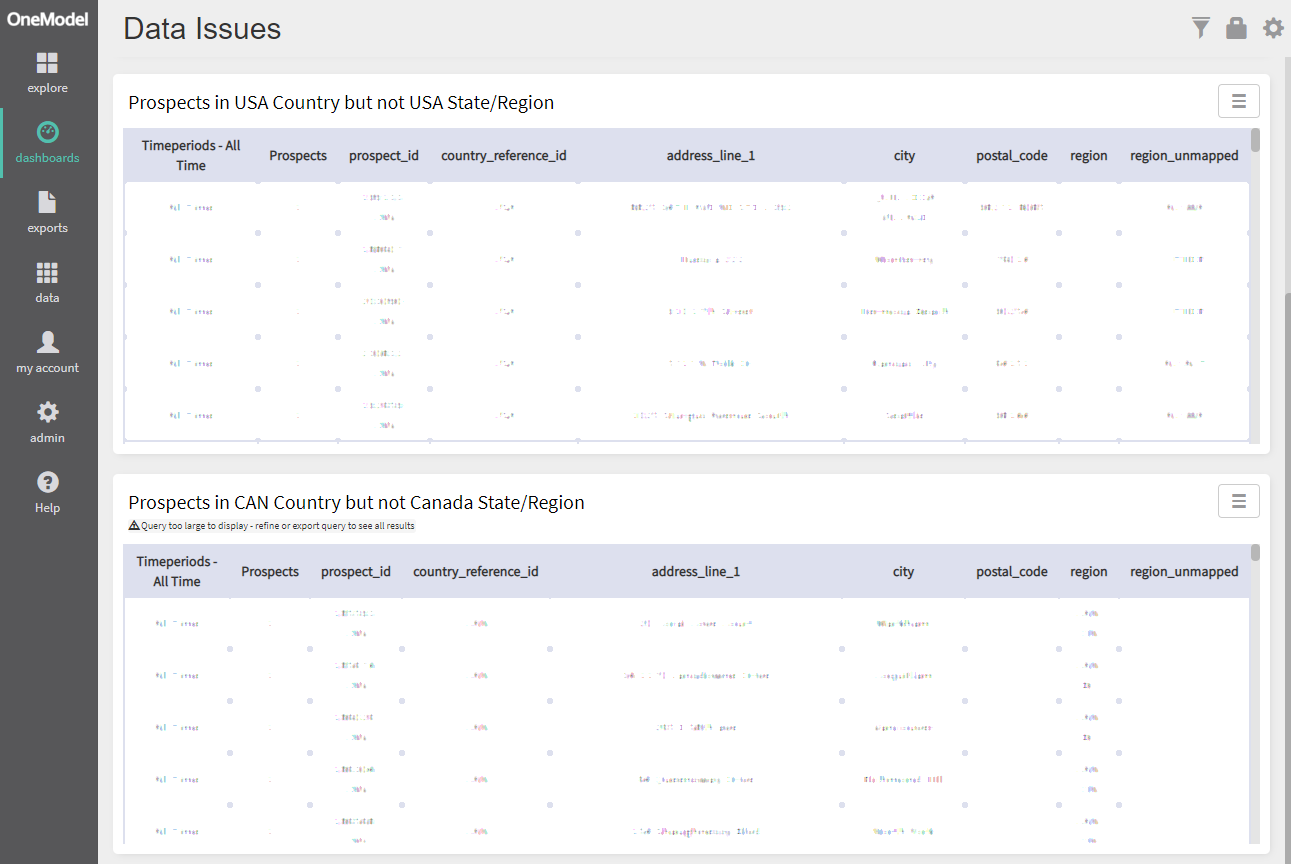

Data quality is further addressed by checking for each of the data scenarios that need to be handled, such as missing values and inconsistencies across fields.
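The two kinds of scenario mentioned, missing values and cross-field consistency, can be expressed as simple checks that produce a scenario list. The field names and the rule that a hire date cannot precede an application date are illustrative assumptions, not rules taken from the actual project.

```python
from datetime import date

# Hypothetical required fields for a candidate record.
REQUIRED = ("candidate_id", "email", "applied_on")


def quality_scenarios(rows):
    """List (record id, scenario) pairs for missing values and cross-field conflicts."""
    issues = []
    for r in rows:
        rid = r.get("candidate_id")
        for f in REQUIRED:
            if r.get(f) in (None, ""):
                issues.append((rid, f"missing {f}"))
        applied, hired = r.get("applied_on"), r.get("hired_on")
        # Consistency check: a hire cannot precede the application.
        if applied and hired and hired < applied:
            issues.append((rid, "hired_on before applied_on"))
    return issues


rows = [
    {"candidate_id": 1, "email": "a@example.com", "applied_on": date(2024, 3, 1), "hired_on": date(2024, 2, 1)},
    {"candidate_id": 2, "email": "", "applied_on": date(2024, 1, 1)},
]
print(quality_scenarios(rows))  # [(1, 'hired_on before applied_on'), (2, 'missing email')]
```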

Evaluate, Adjust, Repeat

It should be immediately apparent from the dashboards and scenario lists if there are problems with the data. If data needs to be corrected at the source, you do so and run a new extraction. Logic or data fills can be catered for in the transformation/modelling layers, including bulk updates to fill any gaps or correct erroneous scenarios. Because the process is automated, you are not redoing these tasks with every run: the manual effort is made once and infinitely repeated.
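The "made once and infinitely repeated" idea can be sketched as a list of correction rules applied to every fresh extract. The rules, field names, and the `apply_rules` helper are hypothetical examples; the point is that each rule is written once and, because these particular rules are idempotent, re-running them on already-corrected data changes nothing.

```python
# Hypothetical correction rules: each is a (predicate, fix) pair.
# Written once, then applied to every new extraction.
RULES = [
    # Fill a missing source-of-hire with a placeholder value.
    (lambda r: not r.get("source"), lambda r: {**r, "source": "Unknown"}),
    # Normalize email addresses so records match across systems.
    (lambda r: isinstance(r.get("email"), str), lambda r: {**r, "email": r["email"].strip().lower()}),
]


def apply_rules(rows, rules=RULES):
    """Apply every matching rule to every row, returning new rows (inputs are not mutated)."""
    out = []
    for r in rows:
        for matches, fix in rules:
            if matches(r):
                r = fix(r)
        out.append(r)
    return out


raw = [{"id": 1, "email": " Ann@Example.COM ", "source": ""}]
clean = apply_rules(raw)
print(clean)  # [{'id': 1, 'email': 'ann@example.com', 'source': 'Unknown'}]
```

Applying `apply_rules` to `clean` a second time returns the same rows, so the rules can safely run on every extraction.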

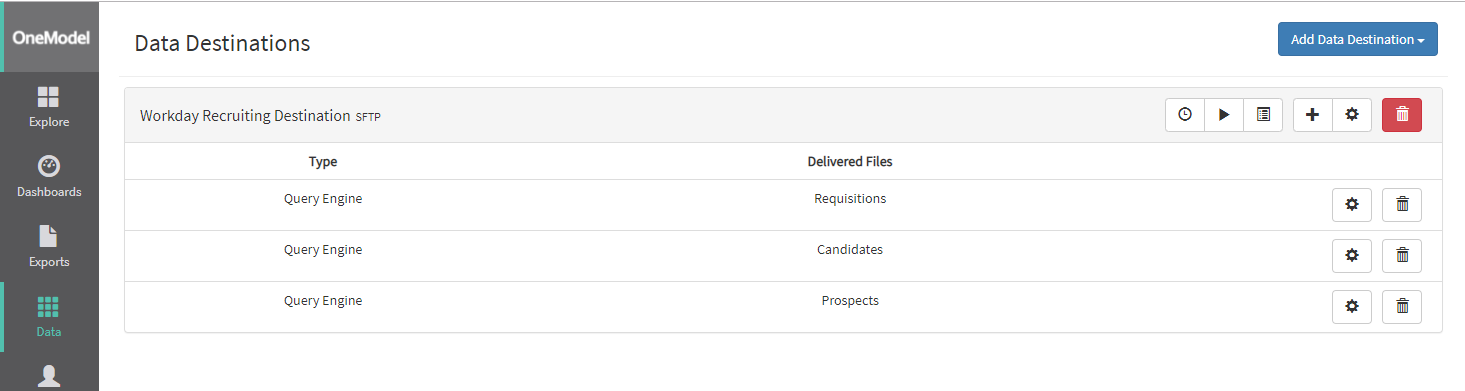

Load to the Target System

It's easy enough to take a table created here and download it as a file for loading into the target system, but ideally you want to automate this step and push to the system's load facilities. That way you can automate the entire process and replace or add to the data set in your new system even while the legacy application is still running and accumulating data. On cutover day, you run a final process and you're done.
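For the file-based route, a table can be written out with a fixed column order. This sketch assumes the target accepts CSV with a header row; `to_load_file` and the column names are hypothetical, and the automated push to a system's load facilities is vendor-specific and not shown.

```python
import csv
import io


def to_load_file(rows, columns):
    """Render rows as CSV text with a fixed column order for a file-based load."""
    buf = io.StringIO()
    # extrasaction="ignore" drops columns the target does not expect;
    # missing values are written as empty strings (DictWriter's default restval).
    writer = csv.DictWriter(buf, fieldnames=columns, extrasaction="ignore")
    writer.writeheader()
    writer.writerows(rows)
    return buf.getvalue()


rows = [{"candidate_id": 7, "stage": "Offer Accepted", "internal_note": "x"}]
print(repr(to_load_file(rows, ["candidate_id", "stage"])))
# 'candidate_id,stage\r\n7,Offer Accepted\r\n'
```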

Validate the Target System Data

Of course, you need to validate that the new system is correctly loaded and functioning, so round-tripping the data back to the people analytics system gives you that oversight, and the same data quality checks can be run against the new system. From here you can merge your legacy and new data sets and provide a continuous timeline for your reporting and analytics across systems, as if they had always been one and the same.
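Round-tripping lends itself to a key-based reconciliation between what was sent and what came back. A minimal sketch, assuming both extracts share a hypothetical `id` key and that the compared fields have already been mapped to the same names and formats; `reconcile` is an illustrative helper, not a One Model or Workday function.

```python
def reconcile(source_rows, target_rows, key, fields):
    """Compare sent vs. loaded rows by key and report gaps and field differences."""
    src = {r[key]: r for r in source_rows}
    tgt = {r[key]: r for r in target_rows}
    mismatched = []
    for k in sorted(src.keys() & tgt.keys()):
        for f in fields:
            if src[k].get(f) != tgt[k].get(f):
                mismatched.append((k, f, src[k].get(f), tgt[k].get(f)))
    return {
        "missing_in_target": sorted(src.keys() - tgt.keys()),
        "unexpected_in_target": sorted(tgt.keys() - src.keys()),
        "mismatched": mismatched,
    }


sent = [{"id": 1, "stage": "A"}, {"id": 2, "stage": "B"}]
loaded = [{"id": 2, "stage": "C"}, {"id": 3, "stage": "A"}]
result = reconcile(sent, loaded, "id", ["stage"])
print(result)
# {'missing_in_target': [1], 'unexpected_in_target': [3], 'mismatched': [(2, 'stage', 'B', 'C')]}
```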

Level of Effort

We spent around 16-20 hours of technical time (excluding some soft-skills time) to run the entire process to completion, which included:

- Building the required logic and destination models for the first time

- Multiple changes to the destination requirements as the external implementation consultant revised their specifications

- Dozens of end-to-end runs as data changed at the source and the destination load was validated

- Building a Python program to extract resume files from TBE; this is now a repeatable program in our augmentations library.

That's not a lot of time, and we could now do the above much faster as the repeatable pieces are in place to move from Taleo Business Edition to Workday's Recruiting module. The same process can be followed for any system.

The Outcome?

"Colliers chose One Model as our data integration partner for the implementation of Workday Recruiting. They built out a tailored solution that would enable us to safely, securely and accurately transfer large files of complex data from our existing ATS to our new tool. They were highly flexible in their approach and very personable to deal with – accommodating a number of twists and turns in our project plan. I wouldn’t hesitate to engage them on future projects or to recommend them to other firms seeking a professional, yet friendly team of experts in data management." - Kerris Hougardy

Adopting new Innovation

We've used the same methods to power new vendors that customers have on-boarded. In short order, a comprehensive cross-system data set can be built and automatically pushed to the vendor enabling their service. Meanwhile the data from your old system is still held in the people analytics framework enabling you to merge the sets for historical reporting.
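Merging the old and new data sets for historical reporting can be as simple as splitting on a cutover date. This sketch assumes a single cutover date and hypothetical `id` and `d` (date) fields; `merge_timeline` is an illustrative helper, and a real project may first need to handle overlapping records or reconcile identifiers across systems.

```python
from datetime import date


def merge_timeline(legacy, current, cutover, date_field, key):
    """Combine legacy rows dated before cutover with new-system rows from cutover on."""
    before = [dict(r, system="legacy") for r in legacy if r[date_field] < cutover]
    after = [dict(r, system="new") for r in current if r[date_field] >= cutover]
    # Sort by date, then key, so the combined history reads as one timeline.
    return sorted(before + after, key=lambda r: (r[date_field], r[key]))


legacy = [{"id": 1, "d": date(2023, 5, 1)}, {"id": 2, "d": date(2024, 2, 1)}]
current = [{"id": 2, "d": date(2024, 2, 1)}, {"id": 3, "d": date(2024, 3, 1)}]
for row in merge_timeline(legacy, current, date(2024, 1, 1), "d", "id"):
    print(row["id"], row["system"])
# 1 legacy
# 2 new
# 3 new
```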

If you can more easily adopt new technology and move between technologies, you mitigate the risks and costs of 'vendor lock-in'. I like to think of this outcome as creating an insurance policy against bad-fit technology. If you know you can stand up a new technology quickly, use it while you need it, and move to something that fits better in the future without losing your data history, then you are more likely to test and adopt new innovation.

Being able to choose the right technology at the right time is crucial for advancing our use of technology and ideally creating greater impact for our organization and employees.

Our Advice for Organizations Planning for an HR System Migration

- Get a handle on, and a view across, your data first. If you are already reporting and delivering analytics on these systems, you have a much better handle on the data and its quality than if you weren't.

- The data is often not as bad as you expect it to be and cleaning up with repeatable logic is much better than infrequently extracting and running manual cleansing routines.

- You could save a huge amount of time in the migration process and use more internal resources to do what you are paying an external implementation consultant to deliver.

- Focus more time on the differences between the systems and what you need to cater for to align the data to the new system.

A properly constructed people analytics infrastructure is a system-agnostic HR Data Strategy and is able to deliver more than just insight to your people. We need to think about our people data differently and take ownership of it independently of the transactional vendor. When we do so, we realize a level of value, flexibility, and ability to adopt innovation that will drive the next phase of people analytics results while supporting HR and the business in improving the employee experience.